In a globally distributed enterprise, networking and server monitoring are not back-office utilities. They are strategic capabilities that determine whether digital services remain stable, secure, and responsive under constant pressure. A company with thousands of employees across multiple regions faces shifting traffic patterns, overlapping time zones, hybrid infrastructure, and an expanding portfolio of applications competing for compute and bandwidth. In that environment, visibility is not a luxury. It is operational oxygen.

Effective monitoring begins with a clear understanding of performance baselines. When engineering teams know what normal looks like across CPU utilization, memory consumption, latency, packet loss, and application response times, deviations stand out and can be acted on quickly. Proactive monitoring, rather than reactive troubleshooting, is the defining difference between resilient systems and recurring outages. As Fortra explains in its overview of proactive network monitoring, small issues identified early can be resolved before they cascade into major disruptions. In a global environment, the stakes compound, because services in different regions depend on one another. A latency spike in one region can ripple into authentication delays, API failures, and degraded customer experiences elsewhere.
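To make the idea of a baseline concrete, here is a minimal sketch of one common approach: summarize historical samples as a mean and standard deviation, then flag new readings that fall too far from that mean. The function names, the sample latency values, and the threshold of three standard deviations are all illustrative assumptions, not a recommendation from any particular monitoring product.

```python
from statistics import mean, stdev


def build_baseline(samples):
    """Summarize historical samples as (mean, sample standard deviation)."""
    return mean(samples), stdev(samples)


def is_deviation(value, baseline, z_threshold=3.0):
    """Return True when value lies more than z_threshold standard
    deviations from the baseline mean."""
    mu, sigma = baseline
    if sigma == 0:
        # A perfectly flat history: any different value is a deviation.
        return value != mu
    return abs(value - mu) / sigma > z_threshold


# Hypothetical latency history in milliseconds, for illustration only.
history_ms = [42, 40, 45, 43, 41, 44, 42, 43]
baseline = build_baseline(history_ms)

print(is_deviation(44, baseline))   # within the normal range
print(is_deviation(120, baseline))  # far outside the normal range
```

In practice a baseline would likely be computed per region and per time of day, since normal traffic at 09:00 in one office is not normal at 03:00 in another.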

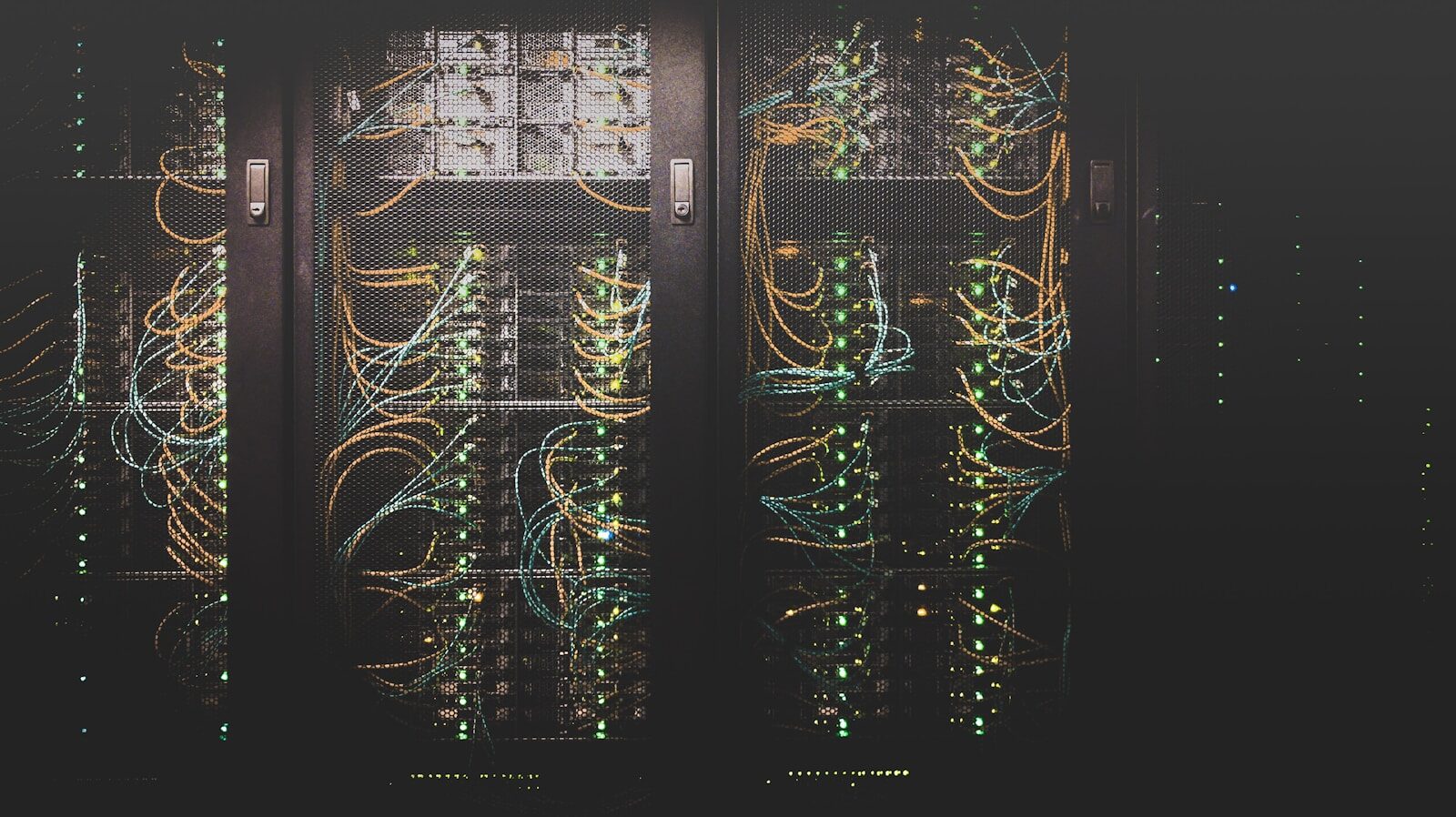

Modern monitoring strategies rely on layered visibility. Infrastructure monitoring tracks physical and virtual servers, storage, and network devices. Application performance monitoring evaluates service dependencies and transaction paths. Log aggregation and analysis surface patterns that human operators would otherwise miss. Real-time dashboards provide operational awareness, while automated alerts route anomalies to the right teams before service-level agreements are breached. When implemented well, this ecosystem functions less like a collection of tools and more like a centralized nervous system.
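As a rough illustration of how automated alerts might reach the right team, the sketch below maps each monitoring layer to an owning team and falls back to a default when no owner is defined. The layer names, team names, and the `Alert` structure are hypothetical; a real deployment would rely on the routing features of its own alerting platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    layer: str     # e.g. "infrastructure", "application", "logs"
    severity: str  # e.g. "warning", "critical"
    message: str


# Hypothetical ownership map: monitoring layer -> responsible team.
ROUTES = {
    "infrastructure": "network-ops",
    "application": "app-sre",
    "logs": "security-ops",
}
DEFAULT_TEAM = "it-service-desk"  # assumed catch-all owner


def route(alert):
    """Return the team that should receive this alert."""
    return ROUTES.get(alert.layer, DEFAULT_TEAM)


alert = Alert(layer="application", severity="critical",
              message="Checkout API p95 latency above target")
print(route(alert))
```

Keeping the ownership map as data rather than logic means it can be reviewed and updated without touching the routing code.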

Security and operational resilience are inseparable from monitoring discipline. Even smaller organizations depend heavily on reliable and well-managed networks, as noted in Forbes’ discussion of network risk exposure. At enterprise scale, the attack surface grows with every endpoint, cloud workload, and remote connection. Continuous monitoring supports early detection of anomalies such as unusual login behavior, unexpected traffic flows, or sudden configuration changes. It also reinforces accountability through audit trails and log retention policies that support compliance and forensic investigation.

However, monitoring at scale is not simply about deploying more dashboards. It requires architectural intent. Metrics must align with business priorities. Alert thresholds must be tuned to reduce noise and prevent alert fatigue. Data must be centralized enough to provide coherence, yet segmented enough to preserve security boundaries. Leadership must also recognize that monitoring data is a strategic asset. It informs capacity planning, investment decisions, vendor evaluations, and digital transformation initiatives.
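One simple way to tune thresholds against noise is to alert only on sustained breaches rather than on every momentary spike. The sketch below fires only when the last few readings all exceed the threshold. The class name, the 90 percent threshold, and the window of three readings are assumptions chosen for illustration.

```python
from collections import deque


class SustainedThresholdAlert:
    """Fire only when the most recent `window` readings all exceed the threshold."""

    def __init__(self, threshold, window=3):
        self.threshold = threshold
        # Keeps only the latest `window` breach flags.
        self.recent = deque(maxlen=window)

    def observe(self, value):
        """Record a reading and return True if the alert should fire."""
        self.recent.append(value > self.threshold)
        return len(self.recent) == self.recent.maxlen and all(self.recent)


# Hypothetical CPU utilization readings (percent), for illustration only.
alert_rule = SustainedThresholdAlert(threshold=90.0, window=3)
cpu_percent = [50, 95, 60, 92, 94, 97, 70]
fired = [alert_rule.observe(v) for v in cpu_percent]
print(fired)
```

With these readings, the isolated spike to 95 is ignored, and the rule fires only on the third consecutive reading above 90. The trade-off is a slightly later alert in exchange for fewer false alarms, which is usually the right exchange for metrics that fluctuate naturally.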

In many ways, a global network resembles the planetary defense grids of science fiction. Individual nodes appear independent, but the stability of the whole depends on synchronized awareness and rapid response. Without coordination, small disruptions escalate into systemic failures. With disciplined monitoring and intelligent automation, the same infrastructure becomes adaptive and resilient.

For senior IT leaders, the lesson is clear. Networking and server monitoring are not tactical line items. They are foundational to business continuity, cybersecurity maturity, and customer trust. The organizations that treat monitoring as a strategic function rather than a reactive chore position themselves to scale confidently, innovate responsibly, and withstand the unexpected.